前言

本文的文字及图片来源于网络,仅供学习、交流使用,不具有任何商业用途,版权归原作者所有,如有问题请及时联系我们以作处理。

作者:Python大数据分析

PS:如有需要Python学习资料的小伙伴可以加点击下方链接自行获取

项目分析

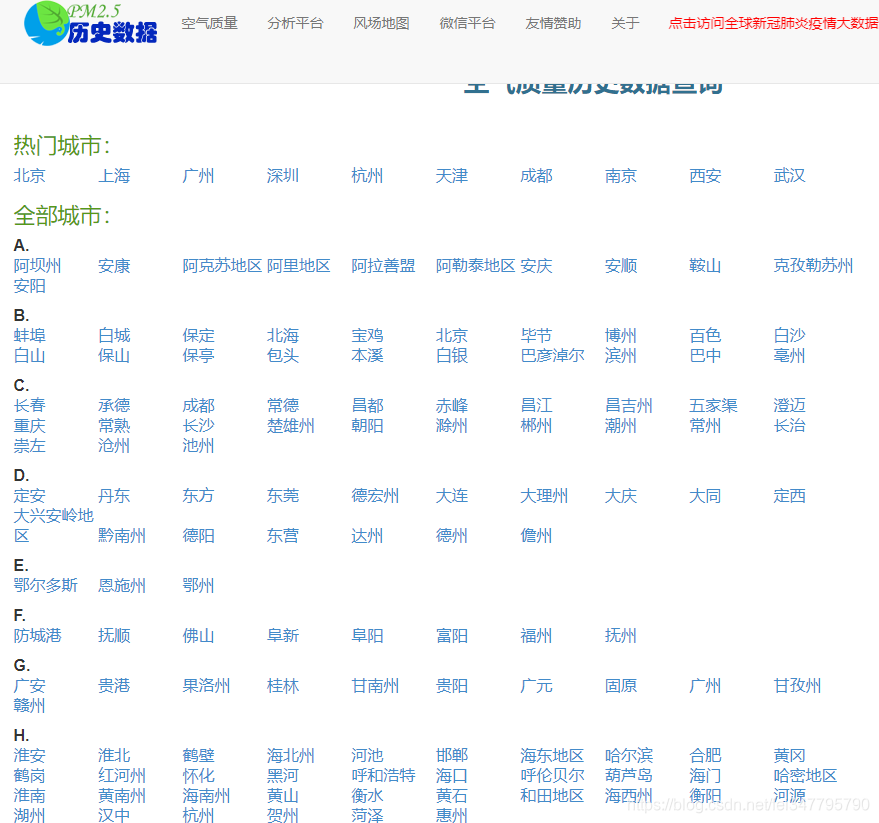

爬取天气网城市的信息

url : https://www.aqistudy.cn/historydata/

爬取主要的信息: 热门城市每一天的空气质量信息

点击月份还有爬取每天的空气质量信息

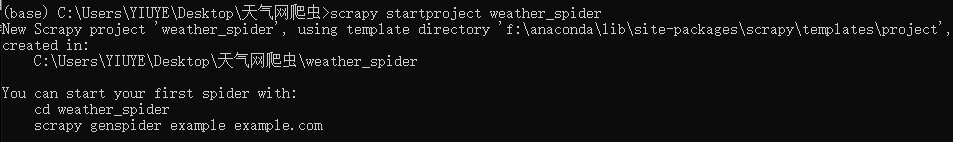

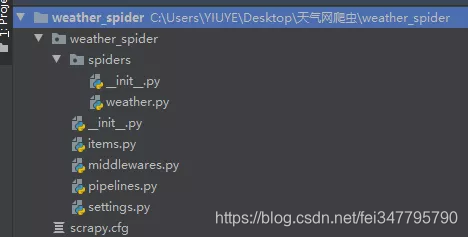

新建项目

-

新建文件夹命令为天气网爬虫

-

cd到根目录,打开cmd,运行scrapy startproject weather_spider

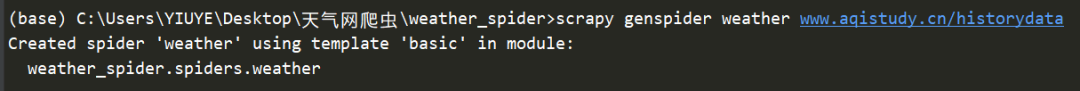

- 创建spider

cd到根目录,运行scrapy genspider weather www.aqistudy.cn/historydata

这里的weather是spider的名字

- 创建的路径如下:

代码编写

对于scrapy,第一步,必须编写item.py,明确爬取的对象

- item.py

import scrapy

class WeatherSpiderItem(scrapy.Item):

# define the fields for your item here like:

# name = scrapy.Field()

"""日期 AQI 质量等级 PM2.5 PM10 SO2 CO NO2 O3_8h"""

city = scrapy.Field()

date = scrapy.Field()

aqi = scrapy.Field()

level = scrapy.Field()

pm25 = scrapy.Field()

pm10 = scrapy.Field()

so2 = scrapy.Field()

co = scrapy.Field()

no2 = scrapy.Field()

o3_8h = scrapy.Field()

对于爬取必须伪装好UA,在setting.py中定义MY_USER_AGENT来存放UA,注意在settings中命名必须大写

- settings.py

MY_USER_AGENT = [

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; AcooBrowser; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.0; Acoo Browser; SLCC1; .NET CLR 2.0.50727; Media Center PC 5.0; .NET CLR 3.0.04506)",

"Mozilla/4.0 (compatible; MSIE 7.0; AOL 9.5; AOLBuild 4337.35; Windows NT 5.1; .NET CLR 1.1.4322; .NET CLR 2.0.50727)",

"Mozilla/5.0 (Windows; U; MSIE 9.0; Windows NT 9.0; en-US)",

"Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; Win64; x64; Trident/5.0; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 2.0.50727; Media Center PC 6.0)",

"Mozilla/5.0 (compatible; MSIE 8.0; Windows NT 6.0; Trident/4.0; WOW64; Trident/4.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; .NET CLR 1.0.3705; .NET CLR 1.1.4322)",

"Mozilla/4.0 (compatible; MSIE 7.0b; Windows NT 5.2; .NET CLR 1.1.4322; .NET CLR 2.0.50727; InfoPath.2; .NET CLR 3.0.04506.30)",

"Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN) AppleWebKit/523.15 (KHTML, like Gecko, Safari/419.3) Arora/0.3 (Change: 287 c9dfb30)",

"Mozilla/5.0 (X11; U; Linux; en-US) AppleWebKit/527+ (KHTML, like Gecko, Safari/419.3) Arora/0.6",

"Mozilla/5.0 (Windows; U; Windows NT 5.1; en-US; rv:1.8.1.2pre) Gecko/20070215 K-Ninja/2.1.1",

"Mozilla/5.0 (Windows; U; Windows NT 5.1; zh-CN; rv:1.9) Gecko/20080705 Firefox/3.0 Kapiko/3.0",

"Mozilla/5.0 (X11; Linux i686; U;) Gecko/20070322 Kazehakase/0.4.5",

"Mozilla/5.0 (X11; U; Linux i686; en-US; rv:1.9.0.8) Gecko Fedora/1.9.0.8-1.fc10 Kazehakase/0.5.6",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.56 Safari/535.11",

"Mozilla/5.0 (Macintosh; Intel Mac OS X 10_7_3) AppleWebKit/535.20 (KHTML, like Gecko) Chrome/19.0.1036.7 Safari/535.20",

"Opera/9.80 (Macintosh; Intel Mac OS X 10.6.8; U; fr) Presto/2.9.168 Version/11.52",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/536.11 (KHTML, like Gecko) Chrome/20.0.1132.11 TaoBrowser/2.0 Safari/536.11",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.71 Safari/537.1 LBBROWSER",

"Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E; LBBROWSER)",

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E; LBBROWSER)",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/535.11 (KHTML, like Gecko) Chrome/17.0.963.84 Safari/535.11 LBBROWSER",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E)",

"Mozilla/5.0 (compatible; MSIE 9.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E; QQBrowser/7.0.3698.400)",

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E)",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 5.1; Trident/4.0; SV1; QQDownload 732; .NET4.0C; .NET4.0E; 360SE)",

"Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; QQDownload 732; .NET4.0C; .NET4.0E)",

"Mozilla/4.0 (compatible; MSIE 7.0; Windows NT 6.1; WOW64; Trident/5.0; SLCC2; .NET CLR 2.0.50727; .NET CLR 3.5.30729; .NET CLR 3.0.30729; Media Center PC 6.0; .NET4.0C; .NET4.0E)",

"Mozilla/5.0 (Windows NT 5.1) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.89 Safari/537.1",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.1 (KHTML, like Gecko) Chrome/21.0.1180.89 Safari/537.1",

"Mozilla/5.0 (iPad; U; CPU OS 4_2_1 like Mac OS X; zh-cn) AppleWebKit/533.17.9 (KHTML, like Gecko) Version/5.0.2 Mobile/8C148 Safari/6533.18.5",

"Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:2.0b13pre) Gecko/20110307 Firefox/4.0b13pre",

"Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:16.0) Gecko/20100101 Firefox/16.0",

"Mozilla/5.0 (Windows NT 6.1; WOW64) AppleWebKit/537.11 (KHTML, like Gecko) Chrome/23.0.1271.64 Safari/537.11",

"Mozilla/5.0 (X11; U; Linux x86_64; zh-CN; rv:1.9.2.10) Gecko/20100922 Ubuntu/10.10 (maverick) Firefox/3.6.10",

"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/58.0.3029.110 Safari/537.36",

]

在定义好UA后,在middlewares.py中创建RandomUserAgentMiddleware类

- middlewares.py

import random

class RandomUserAgentMiddleware(object):

def __init__(self, user_agents):

self.user_agents = user_agents

@classmethod

def from_crawler(cls, crawler):

# 从settings.py中导入MY_USER_AGENT

s = cls(user_agents=crawler.settings.get('MY_USER_AGENT'))

return s

def process_request(self, request, spider):

agent = random.choice(self.user_agents)

request.headers['User-Agent'] = agent

return None

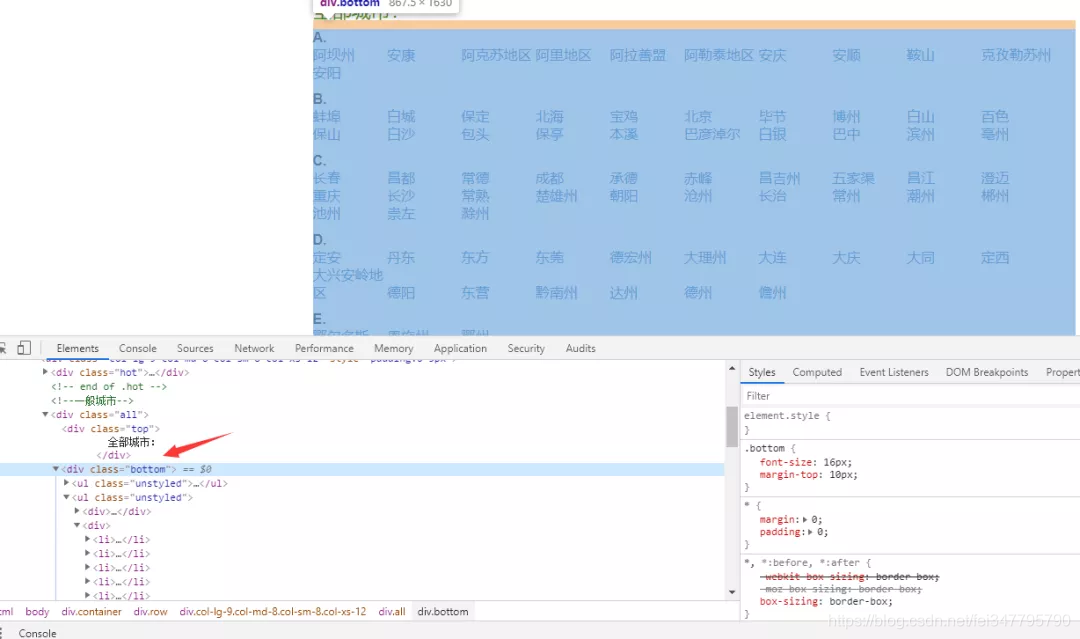

开始编写最重要的spider.py,推荐使用scrapy.shell来一步一步调试

- 先拿到所有的城市

在scrapy中xpath方法和lxml中的xpath语法一样

我们可以看出url中缺少前面的部分,follow方法可以自动拼接url,通过meta方法来传递需要保存的city名字,通过callback方法来调度将下一个爬取的URL

- weather.py

def parse(self, response):

city_urls = response.xpath('//div[@class="all"]/div[@class="bottom"]//li/a/@href').extract()[16:17]

city_names = response.xpath('//div[@class="all"]/div[@class="bottom"]//li/a/text()').extract()[16:17]

self.logger.info('正在爬去{}城市url'.format(city_names[0]))

for city_url, city_name in zip(city_urls, city_names):

# 用的follow快捷方式,可以自动拼接url

yield response.follow(url=city_url, meta={'city': city_name}, callback=self.parse_month)

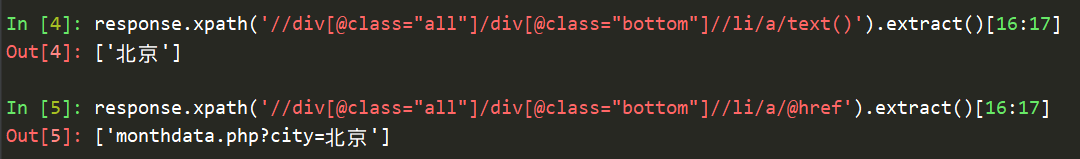

这时就是定义parse_month函数,首先分析月份的详情页,拿到月份的url

还是在scrapy.shell 中一步一步调试

通过follow方法拼接url,meta来传递city_name要保存的城市名字,selenium:True先不管

然后通过callback方法来调度将下一个爬取的URL,即就是天的爬取详细页

- weather.py

def parse_month(self, response):

"""

解析月份的url

:param response:

:return:

"""

city_name = response.meta['city']

self.logger.info('正在爬取{}城市的月份url'.format(city_name[0]))

# 由于爬取的信息太大了,所有先爬取前5个

month_urls = response.xpath('//ul[@class="unstyled1"]/li/a/@href').extract()[0:5]

for month_url in month_urls:

yield response.follow(url=month_url, meta={'city': city_name, 'selenium': True}, callback=self.parse_day_data)

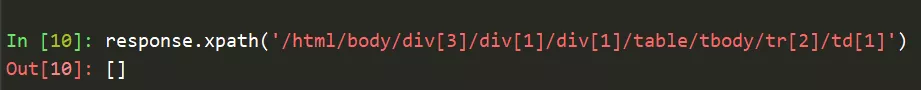

现在将日的详细页的信息通过xpah来取出

发现竟然为空

同时发现了源代码没有该信息

说明了是通过js生成的数据,scrapy只能爬静态的信息,所以引出的scrapy对接selenium的知识点,所以上面meta传递的参数就是告诉scrapy使用selenium来爬取。

复写WeatherSpiderDownloaderMiddleware下载中间件中的process_request函数方法

- middlewares.py

import time

import scrapy

from selenium import webdriver

from selenium.webdriver.chrome.options import Options

class WeatherSpiderDownloaderMiddleware(object):

def process_request(self, request, spider):

if request.meta.get('selenium'):

# 为了让浏览器能够无界面的工作

chrome_options = Options()

# 设置chrome浏览器无界面模式

chrome_options.add_argument('--headless')

driver = webdriver.Chrome(chrome_options=chrome_options)

# 用浏览器去访问这个地址

driver.get(request.url)

time.sleep(1.5) # 因为浏览器需要加载渲染

html = driver.page_source

driver.quit()

return scrapy.http.HtmlResponse(url=request.url, body=html, encoding='utf-8', request=request)

return None

激活WeatherSpiderDownloaderMiddleware

DOWNLOADER_MIDDLEWARES = {

'weather_spider.middlewares.WeatherSpiderDownloaderMiddleware': 543,

'weather_spider.middlewares.RandomUserAgentMiddleware':900,

}

最后编写weather.py中的剩下代码

from ..items import WeatherSpiderItem

def parse_day_data(self, response):

"""

解析每天的数据

:param response:

:return:

"""

node_list = response.xpath('//tr')

# 去掉表头

node_list.pop(0)

print(response.body)

print('开始爬取……')

print(node_list)

for node in node_list:

item = WeatherSpiderItem

item['city'] = response.meta['city']

item['date'] = node.xpath('./td[1]/text()').extract_first()

item['aqi'] = node.xpath('./td[2]/text()').extract_first()

item['level'] = node.xpath('./td[3]//text()').extract_first()

item['pm25'] = node.xpath('./td[4]/text()').extract_first()

item['pm10'] = node.xpath('./td[5]/text()').extract_first()

item['so2'] = node.xpath('./td[6]/text()').extract_first()

item['co'] = node.xpath('./td[7]/text()').extract_first()

item['no2'] = node.xpath('./td[8]/text()').extract_first()

item['o3_8h'] = node.xpath('./td[9]/text()').extract_first()

yield item

入库操作

这里入的库是Mongodb,在settings.py中配置

MONGO_URI='192.168.96.128' #虚拟机ip

MONGO_DB='weather' #表名

对于入门主要处理的是pipelines中

- pipelines.py

import pymongo

class MongoPipeline(object):

def __init__(self,mongo_uri,mongo_db):

self.mongo_uri=mongo_uri

self.mongo_db=mongo_db

@classmethod

def from_crawler(cls, crawler):

return cls(

mongo_uri=crawler.settings.get('MONGO_URI'),

mongo_db=crawler.settings.get('MONGO_DB')

)

def open_spider(self, spider): # 当爬虫开启时连接MongoDB数据库

self.client = pymongo.MongoClient(self.mongo_uri)

self.db = self.client[self.mongo_db]

def process_item(self, item, spider):

name = item.__class__.__name__

self.db[name].insert(dict(item)) # 保存数据

return item

def close_spider(self, spider): # 当爬虫关闭时关闭数据库连接

self.client.close()

在settings中激活pipelines

ITEM_PIPELINES = {

'weather_spider.pipelines.MongoPipeline': 300,

}

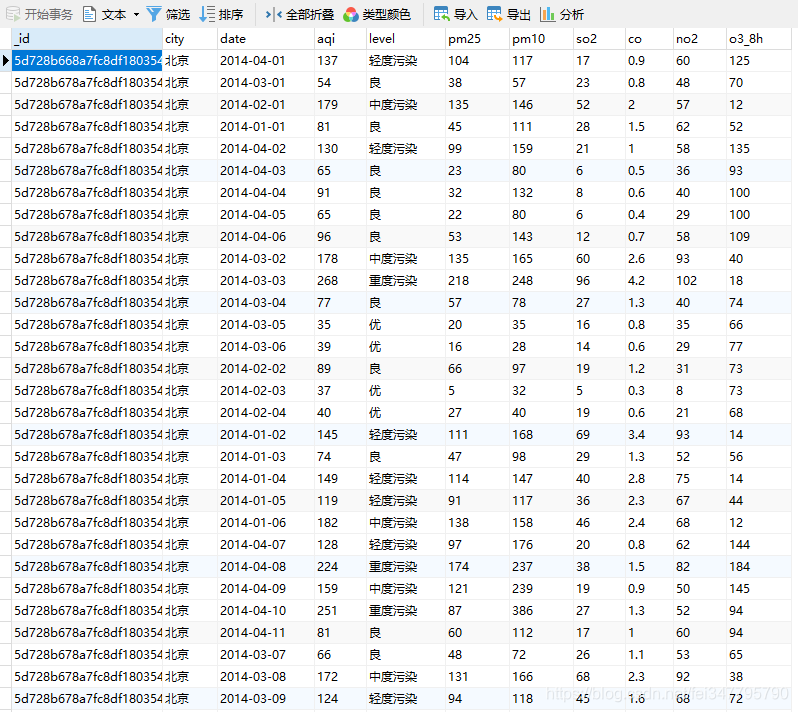

效果如下